
Shadow AI Exposure Forces Enterprises to Establish Auditable AI Pathways

Ronaldo Andrade, CISO at Ivy Group S/A, explains why Shadow AI represents a structural accountability failure, not a user behavior problem.

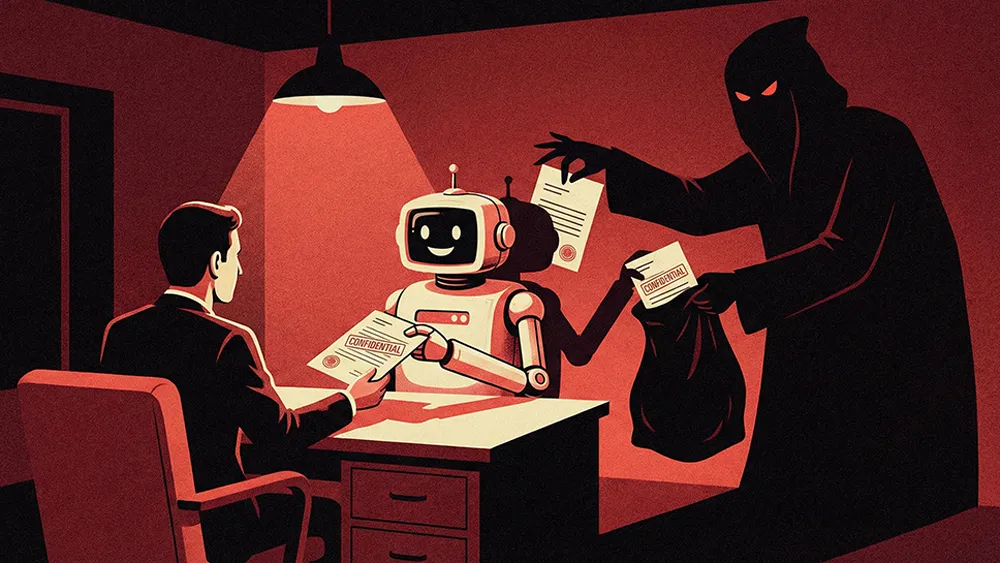


Employees across every function are already using AI to draft content, analyze data, generate code, and accelerate decisions, most of them without formal approval, oversight, or guardrails. The security models meant to catch this activity are largely built for threats that announce themselves. Shadow AI does not. It leaks strategic and personal data in fragments, through everyday prompts, with no alerts and no incident triggers. The exposure compounds quietly, and by the time it becomes visible, the damage is already distributed across systems and third-party ecosystems.

Ronaldo Andrade is the CISO at Ivy Group S/A, a Brazilian technology holding company with business units spanning cybersecurity, software development, and R&D. Andrade brings over 20 years of cybersecurity and risk advisory experience across regulated industries in the U.S. and Latin America. He believes effective AI governance is less about restriction and more about building auditable, owned pathways for use.

"The most underestimated risk is the silent and progressive leakage of information through everyday AI prompts. Unlike phishing or ransomware, there's no alert. But over time, you lose control of strategic and sensitive data." Andrade sees Shadow AI as a structural governance breakdown, not a fringe behavior problem. The pattern is consistent across both the Brazilian and U.S. markets, and it starts with a basic misalignment: security teams still try to control systems, while employees work within fast-paced workflows focused on speed and delivery.

Adaptation, not rebellion: "When organizations fail to keep pace with this reality or lack visibility into it, employees naturally find their own solutions," Andrade says. "This isn't rebellion. It's adaptation. The real problem arises when this adaptation involves sensitive data being entered into AI tools without any guidance, control, or proper protection."

In the shadows: Sales, Marketing, HR, and Legal are the most active users, inserting internal, personal, and strategic information into prompts for content creation, analysis, and decision support. On the technical side, IT and engineering teams use AI for code generation, code review, and troubleshooting. "While these teams tend to have greater technical awareness, they also have a stronger ability to bypass formal controls," Andrade notes. "The biggest risk isn't who uses AI the most, but who uses it with sensitive data and without clear guidance."

The distinction between Shadow AI exposure and traditional threats is critical for how security leaders prioritize response. Phishing and ransomware trigger visible incidents. Shadow AI data leakage happens gradually and without clear alerts. Small fragments of information, when combined over time, can expose strategic context, personal data, contracts, and internal architecture. Andrade also flags a risk that rarely enters the conversation: how this information may be reused across providers, integrations, and third-party ecosystems that organizations have never mapped.

Ban vs. plan: "Banning AI use only creates an illusion of control," Andrade says. "Effective governance requires treating AI as a corporate capability, with clear rules, approved tools, and well-defined responsibilities. When organizations provide practical guidance and secure paths for usage, employee behavior naturally adapts. Governance stops being a blocker and becomes an enabler."

The ownership gap: The deeper failure, Andrade argues, is that most organizations still cannot answer basic questions about who owns AI initiatives, who approves integrations, or who is responsible when an incident occurs. "Without this clarity, any policy remains theoretical," he says. "Another key issue is the third-party ecosystem involved in AI usage, which is often unmapped."

The trajectory is clear. AI will deepen its integration into systems, workflows, and operational decisions. That makes the risk harder to isolate and the consequences of inaction more expensive. Andrade frames Shadow AI as a maturity test, not a technology problem. Organizations that establish visibility, ownership, and auditable data flows can balance productivity and security. Those that do not are not simply deferring risk. They are absorbing it. "Without visibility into where data flows, effective governance simply doesn't exist," Andrade says. "Managing Shadow AI is less about technology and more about clarity, accountability, and the ability to demonstrate control."