Enterprise Security Leaders Return to First Principles to Cut Through AI Marketing Hype

Ben Rothke, Senior Information Security Manager at Experian, discusses how organizations can use disciplined self-assessment to cut through marketing and successfully integrate AI into their security infrastructure.

Cybersecurity’s oldest problems (patching, identity management, and code security) remain its most persistent risks, creating openings for everything from ransomware to nation-state actors targeting valuable intellectual property. Fueled by massive investment and overhyped capabilities, artificial intelligence now dominates discussion in the field, yet many organizations are finding a gap between the promise of AI and its current reality.

We spoke with Ben Rothke, the Senior Information Security Manager at Experian, about how security leaders can navigate the hype and reality of AI. As a long-term security manager and consultant, founding member of the Cloud Security Alliance, and the author of Computer Security: 20 Things Every Employee Should Know, Rothke has experienced his fair share of hype cycles. He is clear, however, that leaders should ignore the marketing and return to a disciplined, first-principles approach.

“AI is extraordinarily powerful, but it’s still in the extremely early stages. The key is to start small, understand your environment, and grow from there,” says Rothke. But what does it mean to follow a disciplined approach? Rothke suggests that AI success will ultimately come from close attention to data quality and a clear understanding of organizational needs.

For many security teams, the first thing a poorly implemented AI delivers is just more noise. SOCs are already wrestling with alert fatigue, and untuned AI tools risk making the signal-to-noise problem worse by generating a flood of false positives. This is a common reason many AI adoption efforts struggle to deliver value, and it challenges the assumption that more data is always better. Rothke says that the goal should be to pursue better, more relevant intelligence rather than massive data streams. Getting to that targeted intelligence depends on deep operational discipline, because enterprise AI tools are intricate systems that demand continuous human oversight.

Signal over noise: Untrained AI is a problem for Rothke, who sees massive streams of unstructured data as a roadblock for security professionals. "The problem with AI is that it can inundate you with information that isn't actionable. That has always been the challenge in security: finding a little good, actionable information instead of massive amounts of data. What you want is that targeted amount of intelligence, not petabytes of noise."

Handle with care: Untrained AI is a problem, but equally problematic is AI that isn't consistently maintained and configured. Rothke compares AI to a Boeing 707 cockpit full of dials and instruments that call for continuous training and configuration. "AI is not a tool that can be set up and then forgotten; it’s the exact opposite. Many AI failures happen because organizations don't grasp the hands-on effort needed. If you are willing to put in that work, the returns will be just as great."

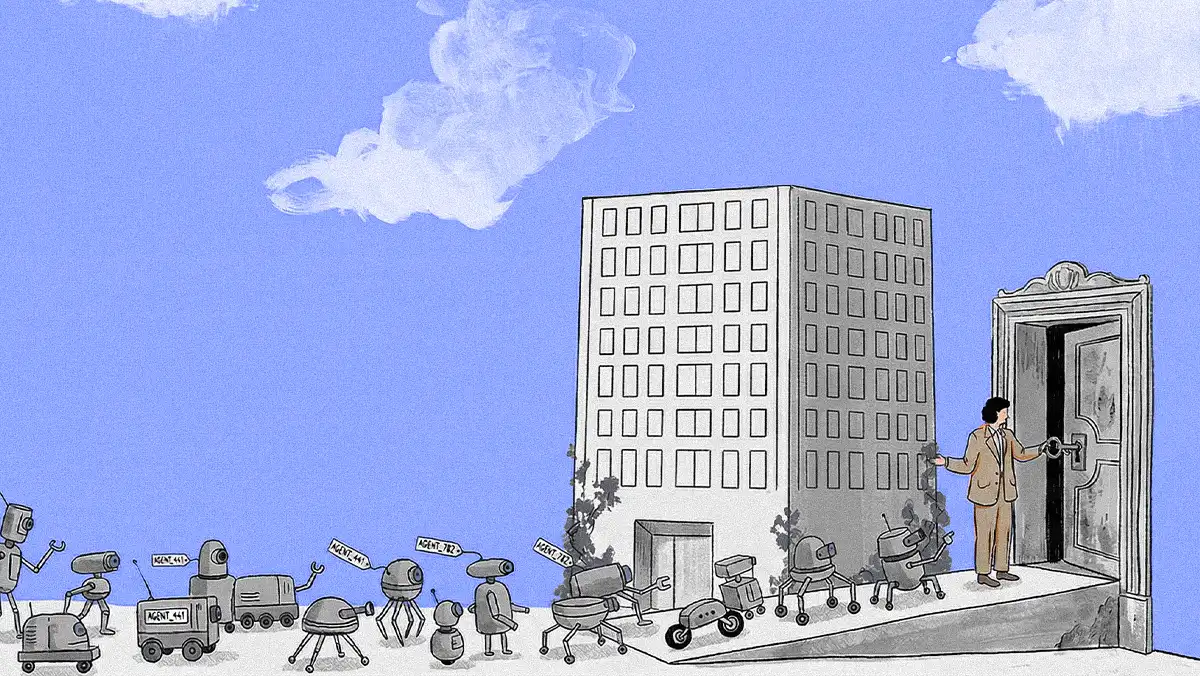

No less important is the relative maturity of modern AI and how it operates in a global risk environment. Rothke says trust should be approached as a nuanced question of governance rather than a simple "do or don't" choice. That reality points to the need for a framework that starts with simple limits and scales up to match an agent's autonomy with the potential impact of an error.

A teen's credit card: AI brings risk, just like any other technology, but Rothke emphasizes that it's up to security leaders to set clear limits to balance that risk with reward. "Governing AI is like giving a credit card to a teen. You wouldn't provide one with no limit because they don't understand risk; you give them one with a strict limit and restrictions. You must take that same slow, deliberate approach with AI, starting with tight controls. It's all a matter of understanding the risk associated with the decisions you allow the system to make."
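The credit-limit analogy can be illustrated with a minimal sketch. It assumes a simple three-level impact scale and a single per-agent limit; the names `Impact`, `AgentPolicy`, `decide`, and `raise_limit` are hypothetical and do not come from Rothke or from any particular product. An action runs unattended only when its potential impact is within the agent's current limit, and the limit is raised one tier at a time.

```python
from dataclasses import dataclass
from enum import IntEnum


class Impact(IntEnum):
    """Hypothetical scale for how costly a wrong decision could be."""
    LOW = 1      # e.g. tagging an alert
    MEDIUM = 2   # e.g. quarantining a single file
    HIGH = 3     # e.g. isolating a production host


@dataclass(frozen=True)
class AgentPolicy:
    """The 'credit limit': highest impact the agent may act on unattended."""
    name: str
    max_autonomous_impact: Impact


def decide(policy: AgentPolicy, impact: Impact) -> str:
    """Return 'auto' if the action is within the limit, else 'needs_human'."""
    if impact <= policy.max_autonomous_impact:
        return "auto"
    return "needs_human"


def raise_limit(policy: AgentPolicy) -> AgentPolicy:
    """Widen the limit by one tier at a time, never past HIGH."""
    next_level = min(policy.max_autonomous_impact + 1, Impact.HIGH)
    return AgentPolicy(policy.name, Impact(next_level))


# Start with tight controls: only low-impact actions run without review.
starter = AgentPolicy("triage-bot", Impact.LOW)
print(decide(starter, Impact.LOW))
print(decide(starter, Impact.HIGH))
```

In this sketch, anything above the limit is routed to a person rather than blocked outright, which keeps a human in the loop while the organization learns how much risk each kind of decision carries.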

This highlights a central theme: there's often a disconnect between the hype of an AI solution and what an organization actually needs. Success with AI often hinges on disciplined planning and execution, and includes selecting a partner capable of a "deep, customized analysis," an approach that distinguishes such a partner from firms with mere "glorified reseller agreements." In Rothke's view, the most foundational work happens when an enterprise takes full account of its architectures, problems, and required solutions. That means leading with a clear understanding of needs rather than being led by marketing.

The buck stops here: Rothke describes a good AI partner as one that can provide a diagnosis of how AI can apply to an organization's needs. But he's also clear that it's up to the organization to provide a clear, sober map of problems, systems, and needs. "If you're looking for a partner, they need to understand your environment, what the pain points are, and how AI can help you. You need a detailed business case: 'Here's our problem. Here's how we're going to implement it.' And once you take a document like that, it's like a good set of architectural plans. You hand that off, and a good AI partner can build it."

Rothke argues that the thorough pre-planning most teams skip is precisely what separates a successful implementation from a disappointing one. "If you simply ask a contractor to build a mansion without providing any plans, the project will fail, and you will be disappointed," he concludes. "That's how a dream house gets built, and it's the same with IT."