To Alleviate Human-AI Agent Bottlenecks, Experts Build Context- and Cryptography-Driven Governance Layers

William duPont, CEO and Co-Founder of Applied Identities, describes the operations gap between autonomous agents and continuous human management, offering a new way forward through context-driven governance layers backed by cryptographic verification.

Enterprise AI teams face an "activity without outcome" problem: companies buy agentic tools expecting massive efficiency gains, only to end up with a tangled mess of localized apps. For security teams, the result is a widening gap between agentic governance and the tooling meant to enforce it. At the same time, increasingly risk-averse enterprises tether supposedly autonomous systems to manual, minute-to-minute human oversight, an approach that drains the very performance they expected from AI.

William duPont has spent over thirty years watching organizations stumble through these exact types of technological growing pains. As a former Client Partner at NTT DATA and current CEO and Co-Founder of Applied Identities, duPont has guided Fortune 500 brands through successive digital transformations. He sees a direct parallel between the early internet and today’s AI and cloud infrastructure: in both cases, a new technology arrived before anyone had settled which department governs it. In the earliest days of the internet, no one knew whether marketing, IT, or operations owned the corporate website. Today, companies struggle with who owns AI and infrastructure.

"There is a gap that sits in the middle of this that nobody has really solved for. Security tools need to know what they’re governing, and in almost all cases, that hasn’t been defined in a systemic or trackable way," says duPont.

The missing middle layer might explain why so many pilots report technical success alongside low user satisfaction. Many organizations still treat digital agents like standard software rather than a new type of workforce. Microsoft tools enable teams to spin up functional agents with minimal effort. The tools work, but the context often falls flat. That bottleneck recently prompted duPont to build the exact architectural layer he believes the market is missing. This layer focuses on a behavioral specification framework that lets humans define guardrails up front while enabling agents to operate autonomously within strict bounds, with guardrails verified cryptographically in real time.

Generic in, generic out: Organizations are shipping pilots at pace, duPont says, but the pace masks a deeper mismatch. “Organizations are deploying generic tools against generic use cases in a generic way. The tools are designed to deliver a certain function, and they do deliver on that function, but they don't deliver it in a way that is contextually accurate for the organization that it's operating within.”

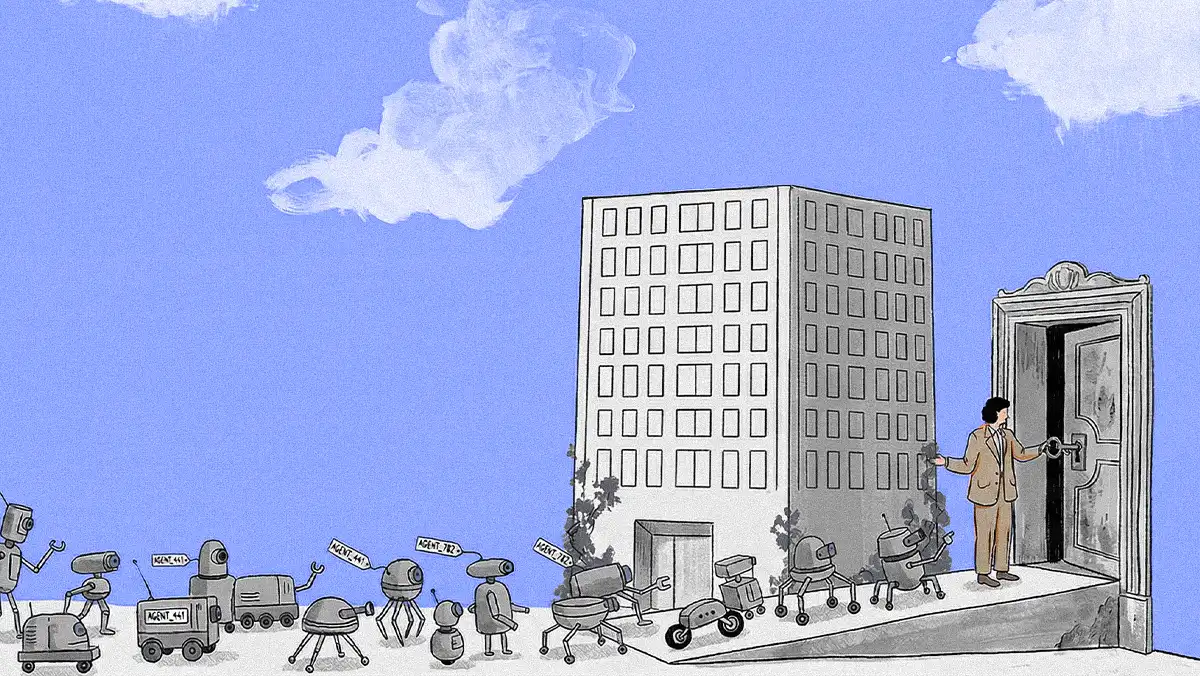

HR for algorithms: The gap, in his view, is that enterprises are not treating agents the way they treat any other new hire. “Think of an agent workforce. With humans, you onboard them, you train them, you give them the tools they need to do their job, but you also teach them about the culture. You manage them, measure their performance, mentor them, and correct them as needed. That's not really happening with the agentic AI at this point because the infrastructure has not been put in place.”

Easy to build, hard to raise: "The cost and skill required to spin up an agent that actually works as designed now is pretty low. A lot of our clients are Microsoft. With a little skill and time, they can spin up an agent, and it will do what they designed it to do. But was it designed to do the right thing? And is it operating within a governance framework that is consistent, that's scalable, that's trackable?"

DuPont makes it clear that dropping an agent into an environment without that organizational context introduces friction that technical metrics rarely capture. To bridge the divide, many organizations rely on manual intervention, keeping humans at the switch to approve or block actions. For use cases where speed is the whole point, that approach hits a wall. From an oversight perspective, he points out that teams may need to rethink how safety and autonomy coexist without choking efficiency.

To fix this, duPont suggests fundamentally rewiring the way security platforms communicate with AI agents. Legacy tools approach the problem from a native security mindset. Adapting them for agentic workflows, he argues, requires building a behavioral specification layer in front of them so they understand precisely what they are governing. For sensitive use cases, especially those involving high-value information or financial transactions, duPont points to hierarchical models similar to Anthropic’s constitution as one way to scope authority from the top down. Without that foundational context, tracking behavior or accounting for drift over time becomes significantly harder.

Flying blind: Security vendors are solving the problems their platforms were built to address, duPont says, leaving a gap nobody is filling. “The security folks are coming with a security mindset and a security perspective and solving the types of questions that their platforms or offerings are designed to solve. You certainly need the tools for security, but those tools need to know what they're governing. In almost all cases, that hasn't really been defined in a systemic, trackable, or enterprise-deployable manner.”

Drift happens: Even a well-designed governance structure decays without continuous measurement. “You need that SOC layer. It is a critical part of the overall architecture. What needs to come before that is a behavioral specification layer designed by humans but not implemented on a minute-to-minute, day-to-day basis. Are you actually able to see the performance and behavior of agents over time and measure them in a tangible way, because drift is a real thing?”

Under his proposed model, humans still define the guardrails up front, while the system enforces them automatically at scale. He outlines a layered architecture that combines universal governance, per‑agent behavioral specifications, and cryptographic verification, so agents can act at machine speed without constantly needing a human to hit "approve." Across these layers, human operators can ensure that agents operate within the parameters they set.
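As a rough illustration of that layering, the Python sketch below checks a proposed action first against enterprise-wide rules and then against a per-agent specification. It is not duPont's or Applied Identities' implementation. Every name in it (`UNIVERSAL_FORBIDDEN_ACTIONS`, `BehaviorSpec`, `is_permitted`, the action strings, and the 0-to-1 risk scale) is a hypothetical chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical universal layer: rules that apply to every agent in the enterprise.
UNIVERSAL_FORBIDDEN_ACTIONS = frozenset({"delete_audit_log", "disable_monitoring"})


@dataclass(frozen=True)
class BehaviorSpec:
    """Hypothetical per-agent specification, written by humans up front."""
    agent_id: str
    allowed_actions: frozenset
    risk_tolerance: float  # assumed scale: 0.0 = accept no risk, 1.0 = fully autonomous


def is_permitted(spec: BehaviorSpec, action: str, estimated_risk: float) -> bool:
    """Return True only if the action passes both governance layers."""
    # Layer 1: universal rules override anything an individual spec allows.
    if action in UNIVERSAL_FORBIDDEN_ACTIONS:
        return False
    # Layer 2: the action must be explicitly listed for this agent.
    if action not in spec.allowed_actions:
        return False
    # Layer 2 (continued): the action's estimated risk must fit the agent's tolerance.
    return estimated_risk <= spec.risk_tolerance


# A transaction-handling agent gets a very low tolerance; a research agent gets more room.
payments_agent = BehaviorSpec("payments-01", frozenset({"issue_refund"}), 0.1)
research_agent = BehaviorSpec(
    "research-01", frozenset({"draft_report", "search_web"}), 0.8
)
```

Under these assumptions, `is_permitted(payments_agent, "issue_refund", 0.5)` is rejected without a human in the loop, while the same risk estimate passes for `research_agent` on `"draft_report"`. How the risk estimate itself would be produced is outside the scope of this sketch.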

Ultimately, however, this isn't just an architecture problem; it's an org chart problem. Much like the dawn of the corporate website, agentic AI raises questions about ownership, responsibility, and collaboration that rarely map cleanly to existing departments.

Rules of the road: Enterprise governance needs both universal rules and per-agent flexibility, duPont explains. “You have to have governance docs in a system that can scale to enterprise level, which means there are some invariables that apply across all agents. Then you have some flexibility in deploying individual agents that require behavior specifications appropriate for the task. If they're dealing in transactions, then your risk level needs to be dialed very low, and you do not want that agent to be innovative. But you may have other things where you want them to be more autonomous.”

Math over manual: Policy without proof is not enough, duPont argues. “You have to have cryptographic verification to ensure that they're operating within the parameters that the humans gave them. It needs to be in a platform implementation, because you cannot do one-off implementations on an enterprise-wide basis.”
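The article does not say which cryptographic scheme duPont has in mind. As one possible reading, the Python sketch below signs a human-approved specification with HMAC-SHA256 from the standard library, so a runtime can detect whether the parameters it is enforcing were altered after approval. The scheme, the spec fields, and the helper names (`canonical_bytes`, `sign_spec`, `verify_spec`) are all assumptions made for illustration.

```python
import hashlib
import hmac
import json


def canonical_bytes(spec: dict) -> bytes:
    """Serialize the spec deterministically so equal specs always sign identically."""
    return json.dumps(spec, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_spec(spec: dict, key: bytes) -> str:
    """Produce a hex HMAC-SHA256 tag over the spec (done once, at approval time)."""
    return hmac.new(key, canonical_bytes(spec), hashlib.sha256).hexdigest()


def verify_spec(spec: dict, signature: str, key: bytes) -> bool:
    """Check at runtime that the spec still matches what was approved."""
    expected = sign_spec(spec, key)
    # compare_digest performs a constant-time comparison to avoid timing leaks.
    return hmac.compare_digest(expected, signature)


# Hypothetical demo values; a real deployment would keep the key in a secrets manager.
key = b"demo-key-not-for-production"
spec = {
    "agent_id": "payments-01",
    "allowed_actions": ["issue_refund"],
    "risk_tolerance": 0.1,
}
signature = sign_spec(spec, key)

# Simulate someone loosening the risk setting after approval.
tampered = dict(spec, risk_tolerance=0.9)
```

Here `verify_spec(spec, signature, key)` succeeds and `verify_spec(tampered, signature, key)` fails. A shared-key HMAC is the simplest option to show. A platform-wide deployment might prefer asymmetric signatures so that verifiers cannot also forge approvals, but that is a design choice the article does not address.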

For duPont, the practical takeaway is that deploying agentic AI requires treating digital agents with the same seriousness as a human workforce. Shared guardrails, clear responsibilities, training, and ongoing management must span security, IT, HR, finance, and operations for these tools to actually do their jobs. The organizations that get this right, he suggests, will be the ones willing to think past their own functional lens and recognize that the problem is bigger than any single team's remit. "Just as you would think about all of the elements being required for a human workforce to be successful, that's HR, that's IT, that's training, it's broader and requires more perspective than any one group or team has had to bring to the table in a long time."