Enterprises Lock the Front Door for AI Agents but Leave the Data Vault Wide Open

Arvinda Rao, Director of Compliance at Veeam, outlines the challenges posed by AI agents, evolving attack surfaces, and the limits of traditional, identity-based security.

Everyone wants autonomous AI workflows, but enterprise AI adoption is stuck in proof-of-concept purgatory. That's not because identity and authentication systems have failed, but because data-layer exposure and unpredictable agent behavior remain unaddressed. As organizations experiment with AI agents that can take action on their behalf, they encounter security questions that traditional tools were not designed to answer. Traditional security asks, "Who are you?" But when dealing with autonomous agents vulnerable to prompt injection and other attacks, the more urgent question becomes, "What are you doing with this data?"

We spoke with Arvinda Rao, Director of Compliance at Veeam. As a Certified AI Algorithm auditor with over 16 years of cybersecurity experience and an IAPP AIGP certification, he's focused on applying granular data controls to AI agents to prevent them from becoming internal vulnerabilities.

As organizations weigh the pros and cons of their architectural decisions, a central debate has emerged over how to structure permissions. Many security leaders view a single, macro-level assistant across an agentic enterprise as a major vulnerability. Since current AI models generally lack human intuition regarding confidentiality, broad access can quickly create new paths for insider threats. "Authentication alone isn't enough," Rao says. "An agent can pass every check and still misuse sensitive data if its behavior isn't monitored."

One bot to rule them all: Rao sees a single, organization-wide agent as one of the most dangerous deployment patterns in enterprise AI. "One god-level agent across the organization is a huge risk. What kind of data access the agent will have, and then monitoring that intended behavior, is the key to product adoption." When a single agent has access to HR or finance datasets, confidential information can leak across departmental boundaries without any malicious intent required. "If I build an agent to answer queries and give it access to HR or finance datasets, it can expose information I am not supposed to know."

Sticking to the script: To reduce that risk, many teams deploy smaller, purpose-built agents designed to answer narrow categories of questions with read-only access. But even at the micro level, those agents require strict data boundaries and regular access reviews to prevent scope creep. Rao points to commercial sales environments as an example of safe, bounded agent use in which the value is clear and data exposure is limited. "If you take a car showroom like Toyota, with thousands of locations and sales agents, they previously had to go through pages of documentation to understand a car's specifications. Today, they can ask an agent and get the answer and specific technical guidance straight away. But that is the limit of its intended purpose. It is not supposed to give out the next pipeline of cars or internal design mechanisms."
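As a rough illustration of the data boundary described above, the sketch below gives a purpose-built agent a read-only allowlist of datasets and rejects anything outside it. All names here (`AgentScope`, `fetch_for_agent`, the dataset keys) are hypothetical and chosen for the showroom example; this is one possible shape under those assumptions, not a description of any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical description of what one purpose-built agent may read."""
    name: str
    readable_datasets: frozenset[str]  # read-only allowlist

    def can_read(self, dataset: str) -> bool:
        return dataset in self.readable_datasets


class ScopeViolation(Exception):
    """Raised when an agent requests data outside its intended purpose."""


def fetch_for_agent(scope: AgentScope, dataset: str,
                    store: dict[str, list[str]]) -> list[str]:
    # Enforce the boundary at the data layer, not in the prompt.
    if not scope.can_read(dataset):
        raise ScopeViolation(f"{scope.name} may not read {dataset!r}")
    # Return a copy so the agent cannot mutate the underlying store.
    return list(store[dataset])


# Illustrative data only: a showroom agent limited to published specifications.
showroom_agent = AgentScope("showroom-specs", frozenset({"vehicle_specs"}))
store = {
    "vehicle_specs": ["engine: 2.0L hybrid"],
    "product_pipeline": ["unreleased model"],
}
```

With this setup, a request for `vehicle_specs` succeeds, while a request for `product_pipeline` raises `ScopeViolation` regardless of how the agent was prompted.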

Just-in-time (JIT) access gained popularity by supporting fluid workflows for human developers. But when applied to autonomous reasoning systems, compliance professionals often counter that human monitoring struggles to scale alongside on-the-fly data provisioning. For many enterprise programs, the safer alternative is to rely on traditional risk profiling and static permissions as part of routine AI agent governance.

Pumping the brakes: Rao argues that the fundamental challenge with dynamic access is the inability to define and monitor intended use at the speed at which agents operate. "The biggest question is how we monitor and define the intended use. That is still the key thing. It's about monitoring and defining that intent every time access is highly dynamic," he says. Instead of pursuing dynamic access models, Rao advocates for a more conservative architecture grounded in controlled data ingestion. "The safest option we are seeing is taking a pretrained version of an LLM as a backbone, ingesting the data, and making sure it is secure. Data is changing every day, but giving an agent so much dynamic access becomes too risky for enterprise adoption." He advises reserving on-the-fly access for small, closed-loop pilots where the scope is limited and behavior can be closely observed, and keeping it away from broad, enterprise-wide agents.

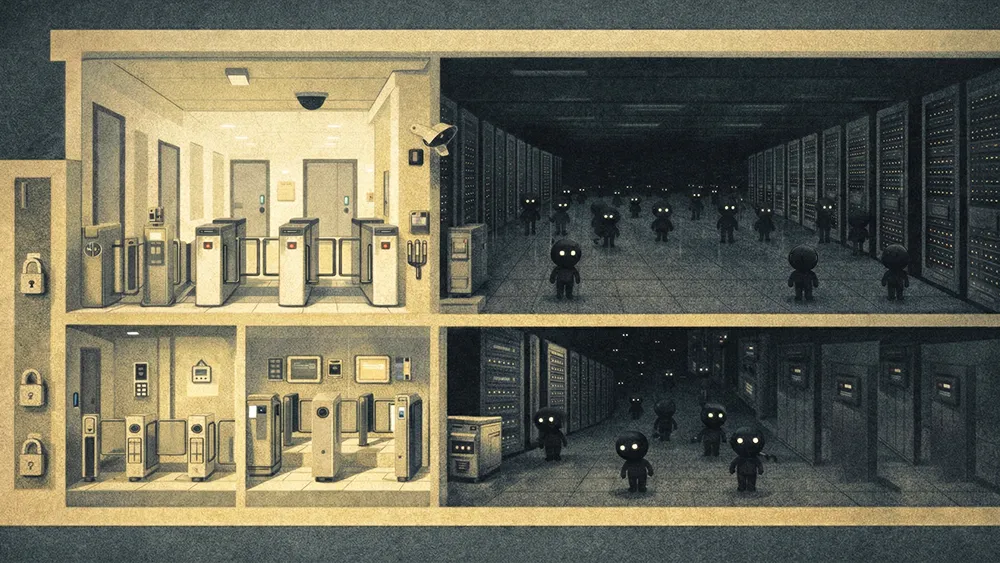

Legacy identity management models such as OAuth and token delegation show practical limits when applied to autonomous agents. Those tools still matter for establishing who gets into a system, but they were not built for non-linear, agent-chained workflows. Security architects often point to a growing capability gap in how legacy architecture handles these tasks. Identity systems evaluate access, not intent. Once an agent clears the initial authentication gate, a manipulated prompt can redirect its actions away from the intended outcome without tripping traditional alarms.

Swiping the corporate card: Rao illustrates the point with agents that have access to financial instruments, where a compromised agent could exploit legitimately granted permissions. "There are agents we can use for travel booking, in which case the agent will have access to my credit card. You don't know whether someone has manipulated the agent into doing the shopping for them. Even with guardrails, unless you monitor the pattern and behavior of the agent, you won't catch it because it already passed the authentication phase and moved on." Moving from "who gets in" to "what they do once inside" pushes teams to continuously observe agent behavior. To keep systems manageable without destroying the speed advantages of AI, Rao recommends modeling agents inside the identity fabric as if they were human employees with delegated authority, subject to granular, frequently revalidated access controls.
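To make the travel-booking example concrete, here is a minimal sketch of a behavior check that runs after authentication has already succeeded. It compares each action against a declared intended-use profile and returns alerts for anything outside it. The types (`AgentAction`, `IntendedUse`), the category names, and the spending limit are all assumptions for illustration; a real deployment would likely look at richer patterns than these two rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    """One purchase attempted by an already-authenticated agent."""
    agent_id: str
    merchant_category: str
    amount: float


@dataclass(frozen=True)
class IntendedUse:
    """Hypothetical profile describing what the agent is meant to do."""
    allowed_categories: frozenset[str]
    max_amount: float


def review_action(action: AgentAction, profile: IntendedUse) -> list[str]:
    """Return alerts for behavior outside the intended use (empty if none)."""
    alerts = []
    if action.merchant_category not in profile.allowed_categories:
        alerts.append(f"unexpected category: {action.merchant_category}")
    if action.amount > profile.max_amount:
        alerts.append(
            f"amount {action.amount:.2f} exceeds limit {profile.max_amount:.2f}"
        )
    return alerts


# Assumed profile for a travel-booking agent.
travel_profile = IntendedUse(frozenset({"airline", "hotel"}), 2000.0)
```

A flight purchase within the limit produces no alerts, while the same agent buying electronics is flagged even though its credentials are valid. That is the gap between checking access and checking intent.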

Babysitting the bots: To scale that oversight, the industry is actively building automated defenses. These tools screen prompts and outputs for policy violations, bridging the gap between static identity checks and fluid AI behavior. Drawing on how enterprises already manage human access, Rao advocates for treating agents as delegated users with scheduled re-authentication and behavioral alerting. "I would treat the AI agent as a delegated user, like an assistant. I would implement periodic authentication, ensuring access permissions are revisited and audited at a defined frequency. Even now, we ask employees to go through that authentication process once in three days or once a week."
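The delegated-user model Rao describes could be sketched as a grant that expires unless it is revalidated on a schedule. The `DelegatedGrant` class and the three-day default below are assumptions drawn from the interval he mentions for employees, not a reference to any specific identity product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DelegatedGrant:
    """Hypothetical record of authority a person has delegated to an agent."""
    agent_id: str
    delegated_by: str
    last_validated: datetime
    # Assumed review interval; set per policy.
    revalidate_every: timedelta = timedelta(days=3)

    def is_current(self, now: datetime) -> bool:
        # The grant lapses once the review interval has fully elapsed.
        return now - self.last_validated < self.revalidate_every

    def revalidate(self, now: datetime) -> None:
        # Called only after the delegating user or an auditor re-approves access.
        self.last_validated = now
```

An enforcement point would call `is_current` before each sensitive action and refuse to proceed once the grant has lapsed, forcing the access review to happen instead of letting permissions persist indefinitely.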

Looking ahead to what failure will look like in the real world over the next year, Rao focuses less on the identity layer and more on data exposure. Identity tooling is relatively mature, but data access for agents often lacks the same level of granularity and review. In his view, over-permissioned data systems with no restrictions will break first—and when they do, they will trigger cascading behavioral risks that amplify enterprise exposure.

Cracking the vault: Rao warns that unresolved data-layer vulnerabilities will not stay isolated—they will compound with behavioral exploits to create systemic risk. "If the data layer is not addressed, then I'm sure, after some time, you will have a behavior issue coming in, which could create a double whammy effect. Data plus the behavior effect, and the risks will explode."

That foresight is exactly why many organizations currently prioritize data boundaries before deploying agents at scale. Trust in AI agents will come from defining intended behavior, enforcing strict data boundaries, and continuously observing agent actions in real time. By starting with clear, narrow scopes for what each agent can see and do, teams layer on behavioral monitoring rather than relying on identity alone to keep autonomous systems in check. "I think behavior is the next wave of attacks if the data layer is not addressed. It will have a double whammy effect. Data, plus the behavioral effect, and the risks will explode."