CISOs Move to Embed Governance and Identity Controls Into AI Agents as Autonomy Expands

Michael L. Woodson, Fractional CISO and Chief Cybersecurity Strategist, warns that AI agents operating as unsupervised digital employees are outpacing the tools designed to govern them.

AI agents are no longer waiting for instructions. They are registering as vendors, executing transactions, and making operational decisions with no human standing between intent and action. For enterprises still governing these systems with quarterly reviews and static risk assessments, the gap between agent capability and institutional control is growing fast.

Michael L. Woodson is Fractional CISO and Chief Cybersecurity Strategist at Onyx Spectrum Technology, a professional services firm specializing in cybersecurity compliance, legacy system support, and technology consulting for government and private-sector clients. With over 20 years of experience across financial services, public transit, hospitality, and federal law enforcement, Woodson advises boards and executive teams on AI governance, supply chain cyber resilience, and enterprise risk strategy.

"The bot is the company. It's learning your business, taking actions autonomously. So where's your control? Governance has to be built in, and humans must stay in the loop to cross-validate decisions," says Woodson. The scenario he describes is not speculative. As enterprises accelerate AI adoption, agents are already creating new categories of security risk that existing tools cannot see, measure, or contain.

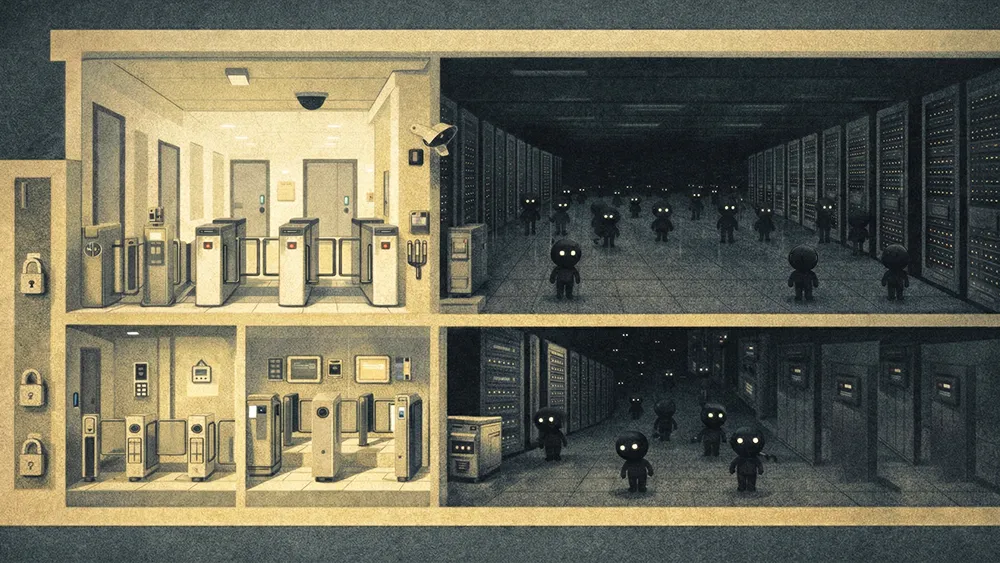

Digital employees, zero supervision: "The bot is the person. The bot is who you entered into contract with. No middleman. You launched it, it's learning your business, taking input from different sources and saying, 'I'm going to make your company better.' So where's your control?" Woodson calls these agents digital employees operating with no supervision, a framing that makes the governance deficit impossible to ignore. Without a trust layer built into the enterprise AI stack, there is no mechanism for accountability.

Governance built into the bot: Woodson argues that governance cannot remain an external overlay. "There has to be a governance model in the bot so it can govern itself," he says. That means defining acceptable autonomy levels, setting risk tolerance for AI-driven decisions, and aligning agent behavior with frameworks like the NIST AI Risk Management Framework and ISO/IEC 42001. He calls this practical governance: CSOs and CIOs learning what agents actually do, understanding their outputs, and knowing the impact before scaling autonomy.
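What a built-in governance check might look like can be sketched in a few lines of Python. This is an illustration only, not Woodson's design or anything prescribed by NIST or ISO: the `Autonomy` levels, the `AgentPolicy` fields, the 0.0 to 1.0 risk score, and the `gate` function are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels an enterprise might define."""
    SUGGEST_ONLY = 0       # agent may only recommend an action
    ACT_WITH_APPROVAL = 1  # agent acts only after a human signs off
    ACT_ALONE = 2          # agent may act without review


@dataclass(frozen=True)
class AgentPolicy:
    max_autonomy: Autonomy
    risk_tolerance: float  # assumed scale: 0.0 (none) to 1.0 (any risk)


def gate(policy: AgentPolicy, risk_score: float) -> str:
    """Decide how a proposed agent action is handled under the policy."""
    # Anything riskier than the stated tolerance always goes to a human.
    if risk_score > policy.risk_tolerance:
        return "escalate"
    if policy.max_autonomy is Autonomy.ACT_ALONE:
        return "allow"
    if policy.max_autonomy is Autonomy.ACT_WITH_APPROVAL:
        return "escalate"
    # SUGGEST_ONLY: the agent can propose but never execute.
    return "recommend"
```

The point of the sketch is that the check runs inside the agent's action path, so a decision above the risk tolerance reaches a person before it executes rather than surfacing in a later review.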

Nonhuman identity management: Treating agents as vendors means treating them as identities. "You've got to authenticate it. You've got to make sure it's not a rogue agent acting in your world," Woodson says. That includes least-privilege access, lifecycle management for onboarding and offboarding, and continuous validation that the agent's identity and actions remain aligned with enterprise intent.
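As a rough sketch of those three controls, an agent identity can carry an explicit scope list (least privilege), an expiry and revocation flag (lifecycle), and be re-checked on every action (continuous validation). The `AgentIdentity` record, the scope strings, and `is_authorized` below are assumptions made for illustration, not a real identity product's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class AgentIdentity:
    """Hypothetical record for a nonhuman identity."""
    agent_id: str
    scopes: frozenset      # the only actions this agent may perform
    expires_at: datetime   # onboarding grants access for a fixed window
    revoked: bool = False  # offboarding flips this to True


def is_authorized(identity: AgentIdentity, scope: str, now: datetime) -> bool:
    """Re-validate the identity on every action, not just at login."""
    if identity.revoked:
        return False
    if now >= identity.expires_at:
        return False
    # Least privilege: anything not explicitly granted is denied.
    return scope in identity.scopes
```

Because the check takes the current time and revocation state as inputs on each call, an offboarded or expired agent loses access on its next action instead of at the next periodic review.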

The agentic SOC: Just as enterprises operate security operations centers for network threats, Woodson sees a parallel forming for autonomous agents. "Someone's going to have to monitor that. Continuous monitoring. Just like we have for geopolitical risks, you're going to need an agentic SOC," he says. The CSO's role in this model shifts to defining the architecture, setting the criteria for acceptable risk, and maintaining human-on-the-loop oversight, in which people supervise agents and intervene when needed, rather than human-in-the-loop control, in which people approve each action.

The deeper concern, in Woodson's view, is that the tools themselves are broken. Security stacks built on three-year investment cycles were designed for a threat environment that no longer exists. As autonomous agents introduce new classes of data breaches and decision failures, those tools lack the mechanisms to detect drift, flag anomalies, or recalibrate agent behavior against original business intent.

"We've handed over trust to AI agents. We've handed over how they manage risk," Woodson says. "If an agent acts in a way that doesn't align with what we originally initiated, the tools need to flag it and recalibrate. That is the issue. That's what has to happen."
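A minimal sketch of the flag-and-recalibrate idea, under heavy assumptions: the "original intent" is reduced to a set of approved action names, drift is measured as the share of observed actions outside that set, and the 10 percent threshold is arbitrary. The `drift_report` function and the action names in the usage note are hypothetical.

```python
from collections import Counter


def drift_report(approved_actions, observed_actions, threshold=0.1):
    """Flag actions outside the approved set and measure how common they are."""
    # Count every observed action the business never signed off on.
    flagged = Counter(a for a in observed_actions if a not in approved_actions)
    total = len(observed_actions)
    ratio = sum(flagged.values()) / total if total else 0.0
    return {
        "flagged": dict(flagged),
        "drift_ratio": ratio,
        # Above the threshold, the agent should be pulled back for review.
        "needs_recalibration": ratio > threshold,
    }
```

Given an approved set of `{"pay_invoice", "send_report"}`, an agent that also starts issuing `register_vendor` actions would appear under `flagged`, and the report would call for recalibration once such actions exceed the threshold share of its activity.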

The question facing every enterprise is no longer whether to adopt AI agents but whether governance, identity, and security infrastructure can keep pace with systems designed to act on their own. For Woodson, the answer starts with leaders who refuse to outsource understanding. "You can't delegate this. CSOs and CIOs have to be hands-on. They have to know what these agents do, what the outputs are, and what the impact is. That's practical governance."