Security Leaders Tie AI Agent Risk To Architecture, Not After-The-Fact Controls

Independent Security and Compliance Consultant Raj Karra on AI agents as the new threat perimeter and how experts must evolve to integrate AI into security while managing the inherent risks.

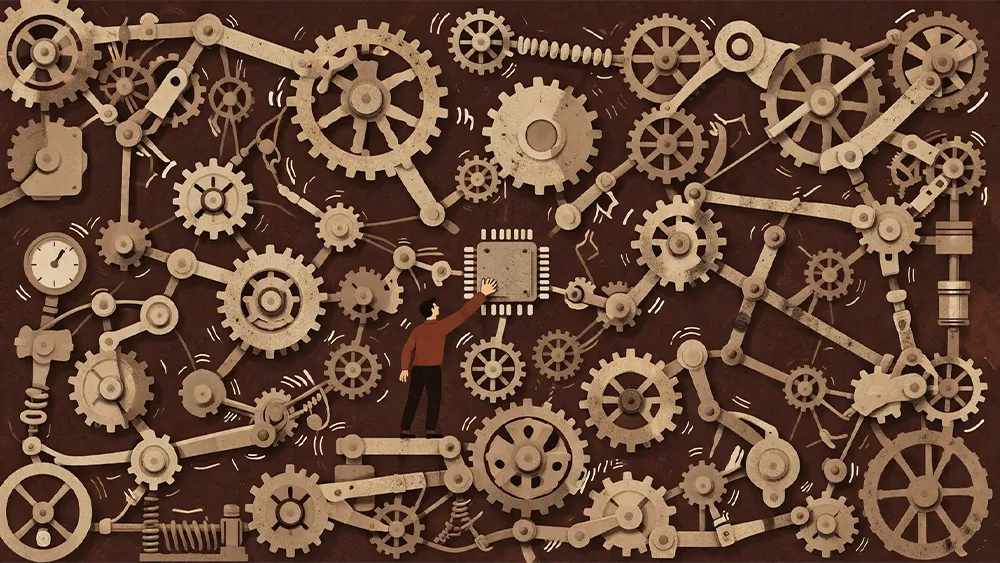

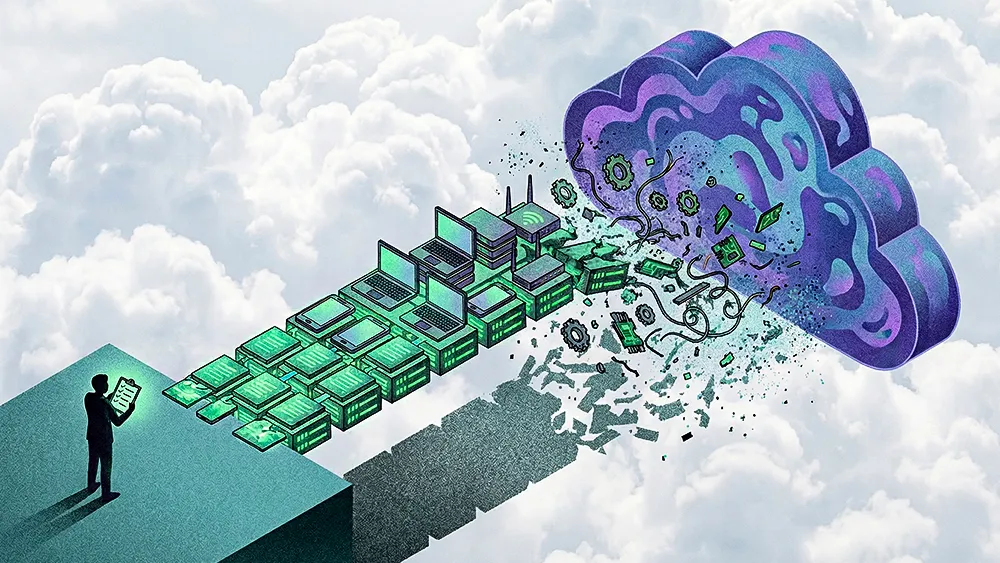

Everyone is rushing to plug autonomous AI agents into their tech stacks, often before they even know how their own plumbing works. Operating in the dark means AI adoption outpaces the security guardrails around it, making it harder for teams to understand and document their exposure. For many practitioners, security, auditability, and AI integration prove more sustainable when they emerge as natural outcomes of sound system architecture rather than reactive fixes or compliance theater.

We spoke with Raj Karra, a software engineer and independent security and compliance consultant who helps fintech and healthtech startups map their data flows to pass enterprise security reviews such as SOC 2 and HIPAA. Drawing on his time as a software engineer at Apple and multiple patents in the privacy and security space, Karra approaches compliance as a systems engineering problem. “Good security starts with good system design and clear surface area,” says Karra. “If you don’t know what your surface area looks like, securing it becomes really hard. That’s where most teams fall short before an audit even begins.”

Feature, not a bug: Karra points out that organizations first need a clear picture of how their environments are actually put together. “It starts at the point when you design the system with clear data flows, make the boundaries clear, and then start by enforcing least privilege and logging at key control points where data moves. Once you go from there, compliance just becomes documenting a well-structured system.” The most dangerous gaps tend to cluster where internal systems meet the outside world. “You have your nodes, such as your API endpoints and databases, and then you have edges that connect them. Failure points cluster at these edges or boundaries.”
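To make the nodes-and-edges framing concrete, here is a minimal Python sketch, assuming a hypothetical inventory in which every node is tagged with a trust zone. The `Node`, `Edge`, and `boundary_edges` names are illustrative and not taken from any tool Karra uses. The sketch flags the edges whose endpoints sit in different zones, which are the control points where least privilege and logging would be enforced first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """A system component such as an API endpoint, database, or vendor."""
    name: str
    zone: str  # hypothetical trust-zone label, e.g. "internal" or "external"


@dataclass(frozen=True)
class Edge:
    """A data flow from one node to another."""
    src: Node
    dst: Node
    data: str  # what kind of data moves along this edge


def boundary_edges(edges: list[Edge]) -> list[Edge]:
    """Return edges that cross from one trust zone into another.

    These boundary crossings are where failures tend to cluster, so they
    are the first places to enforce least privilege and logging.
    """
    return [e for e in edges if e.src.zone != e.dst.zone]


if __name__ == "__main__":
    browser = Node("browser", "external")
    gateway = Node("api-gateway", "internal")
    db = Node("patient-db", "internal")
    vendor = Node("email-vendor", "external")

    flows = [
        Edge(browser, gateway, "login request"),
        Edge(gateway, db, "patient record"),
        Edge(gateway, vendor, "receipt email"),
    ]
    for e in boundary_edges(flows):
        print(f"{e.src.name} -> {e.dst.name}: {e.data}")
```

Running it prints `browser -> api-gateway: login request` and `api-gateway -> email-vendor: receipt email`. The gateway-to-database edge is not flagged because both ends sit in the same zone.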

In fast-moving CI/CD environments, a single release can move a system far from what an auditor saw on paper. The profession is starting to grapple with the speed of modern deployments, as the AICPA pushes toward audit and assurance that go beyond SOC 2. At the same time, platforms like Vanta are automating much of the evidence-gathering work, which frees engineering teams to spend more of their time on architecture rather than on taking screenshots.

Blink, and it's gone: Baseline SOC 2 audits measure real controls and provide fundamental value, but Karra explains they remain static snapshots. “SOC 2 does measure real controls, so it's better than nothing. But ultimately, it's a point-in-time audit. You can pass your audit and then a week later ship three new integrations and a new feature, and all of a sudden your architecture drifts from what was compliant and certified by the auditor to something that's not compliant.” To keep that drift in check, Karra advocates for rigorous internal processes over buying new tools. He points to everyday engineering work, such as version upgrades, as the right place to anchor continuous review.
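One way to anchor continuous review in everyday engineering work is a drift check that runs on each release. The sketch below assumes, hypothetically, that the data flows certified at audit time are stored as a set of (source, destination) pairs; `architecture_drift` is an illustrative name, and a real pipeline would load both sets from its own inventory rather than hard-code them.

```python
# A data flow is modeled as a (source, destination) pair of component names.
Flow = tuple[str, str]


def architecture_drift(certified: set[Flow], current: set[Flow]) -> dict[str, set[Flow]]:
    """Compare the flows certified at audit time with the flows present now."""
    return {
        "added": current - certified,    # flows the auditor never saw
        "removed": certified - current,  # certified flows that no longer exist
    }


if __name__ == "__main__":
    certified = {("api-gateway", "patient-db"), ("api-gateway", "payments")}
    # A week after the audit: three new integrations have shipped.
    current = certified | {
        ("api-gateway", "crm-vendor"),
        ("api-gateway", "analytics-sdk"),
        ("patient-db", "llm-summarizer"),
    }
    drift = architecture_drift(certified, current)
    for src, dst in sorted(drift["added"]):
        print(f"new flow not covered by last audit: {src} -> {dst}")
```

A non-empty `added` set is a signal to review those flows against existing controls before the next audit, rather than discovering the drift during it.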

Show the receipts: The question is not just whether a change was made, but how teams demonstrate that it did not quietly break an existing control. “Perhaps I can demonstrate that we upgraded this Apache version and didn't find these vulnerabilities. And from our existing controls at this audit point in time, the actual effect of these controls hasn't changed.”
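The second half of that quote, showing that a control's effect has not changed, can be checked mechanically. A minimal sketch, assuming controls are expressed as hypothetical predicates over a simplified service configuration: evaluate every control before and after an upgrade and report any whose result changed. The config keys and control names are illustrative. Vulnerability scanning, the first half of the quote, would come from separate tooling and is not modeled here.

```python
from typing import Callable

# Simplified, hypothetical service configuration.
Config = dict[str, object]

# Hypothetical controls, each a predicate that passes or fails on a config.
CONTROLS: dict[str, Callable[[Config], bool]] = {
    "tls_required": lambda c: c.get("tls") is True,
    "directory_listing_off": lambda c: c.get("directory_listing") is False,
    "access_logging_on": lambda c: bool(c.get("access_log")),
}


def evaluate(config: Config) -> dict[str, bool]:
    """Run every control against one configuration."""
    return {name: check(config) for name, check in CONTROLS.items()}


def changed_controls(before: Config, after: Config) -> list[str]:
    """Names of controls whose pass/fail result differs after a change."""
    b, a = evaluate(before), evaluate(after)
    return [name for name in CONTROLS if b[name] != a[name]]


if __name__ == "__main__":
    before = {"server_version": "old", "tls": True,
              "directory_listing": False, "access_log": "/var/log/access.log"}
    after = {**before, "server_version": "new"}  # only the version changed
    print(changed_controls(before, after))  # an empty list means no control changed
```

Storing both `evaluate` results alongside the upgrade ticket could serve as the receipt: the same controls, checked the same way, with the same outcome.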

The legacy trap: Teams running on long-lived, stable infrastructure naturally view new processes as a disruption rather than an improvement. “When you have a lot of legacy infrastructure, it comes with the mindset that we've been doing it this way for a while, and there's that inertia that they have to fight. Why change it now?”

That organizational inertia is now colliding with the push toward agentic SOCs. Karra does not treat AI agents as quasi-employees; he treats them as untrusted nodes that need clear scopes and tight interfaces. Researchers observe that because AI agents execute tasks autonomously, they can behave a lot like malware if their access isn't properly scoped across environments.
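Treating an agent as an untrusted node can start with deny-by-default scopes. A minimal sketch, assuming a hypothetical `AgentScope` that lists the actions one agent may take in each environment; anything unlisted, including any environment that is not named at all, is denied.

```python
from dataclasses import dataclass, field


@dataclass
class AgentScope:
    """Hypothetical per-environment allowlist for a single AI agent.

    Access is deny-by-default: the agent may only take actions that are
    explicitly listed for the environment it is operating in.
    """
    agent_id: str
    allowed: dict[str, set[str]] = field(default_factory=dict)  # env -> actions

    def permits(self, env: str, action: str) -> bool:
        # Unknown environments map to an empty set, so everything is denied.
        return action in self.allowed.get(env, set())


if __name__ == "__main__":
    scope = AgentScope(
        agent_id="triage-agent",
        allowed={
            "staging": {"read_logs", "open_ticket", "restart_service"},
            "production": {"read_logs", "open_ticket"},
        },
    )
    print(scope.permits("staging", "restart_service"))
    print(scope.permits("production", "restart_service"))
    print(scope.permits("dev", "read_logs"))
```

Here the agent can restart a service in staging but not in production, and an environment the scope never mentions, such as `"dev"`, grants nothing.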

Ctrl-Z security: Governing this autonomy tends to benefit from a hybrid human-AI SOC model. According to Karra, machines handle the high-volume, low-latency work, while humans step in where the blast radius is high. “If you have an action that isn't damaging, then it's not a problem if an AI agent does it. But then you get mission-critical tasks, such as deleting data, that's when you want a human in the loop, and you want to have controls and safeguards in place.”
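The blast-radius split Karra describes can be sketched as a dispatcher that runs low-risk actions immediately and holds high-risk ones until a human approves. The action names and the risk table below are hypothetical; a real SOC would derive them from its own threat model. Unknown actions default to high risk so that a newly added capability cannot slip past the gate.

```python
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical classification of agent actions by blast radius.
ACTION_RISK = {
    "enrich_alert": Risk.LOW,
    "open_ticket": Risk.LOW,
    "delete_data": Risk.HIGH,
    "disable_account": Risk.HIGH,
}


def dispatch(action: str, approved_by: Optional[str] = None) -> str:
    """Execute low-risk actions; require a named human approver for the rest."""
    # Anything not in the table is treated as high risk by default.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    if risk is Risk.LOW:
        return f"executed {action}"
    if approved_by is None:
        return f"queued {action} for human approval"
    return f"executed {action} (approved by {approved_by})"


if __name__ == "__main__":
    print(dispatch("enrich_alert"))
    print(dispatch("delete_data"))
    print(dispatch("delete_data", approved_by="on-call-analyst"))
```

The agent keeps the high-volume, low-latency work, while a destructive action such as `delete_data` stays queued until a person signs off.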

Babysitting the bots: Karra sees work moving toward higher-skill oversight roles, an evolution of the security team where experts track gaps in agentic AI security capabilities. “When you have manual tasks, you'd have fewer analysts because that's where agentic AI shines. But you'll also need more engineers to oversee it. You can't just deploy these systems with zero oversight or humans in the loop and expect it to just magically work.” But how do you actually enforce that human oversight? It comes down to policy that is hard-coded into the rules governing AI agents.

Talk is cheap: Karra is clear that writing a corporate policy is only the starting line. For many teams, moving toward outcome-based security involves translating abstract rules into hard technical boundaries. “Ultimately, it comes down to actually enforcing the policies at these control points instead of just having the policies purely on paper, regardless of whether it's human or agentic.”
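Enforcing a policy at a control point, rather than only writing it down, might look like the sketch below. It assumes a hypothetical `WRITE_POLICY` table and `authorize_write` check that apply the same rule to every principal, human or agent, and log each decision so that the audit trail is produced as a side effect of enforcement.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("control_point")


@dataclass(frozen=True)
class Principal:
    name: str
    kind: str  # "human" or "agent"; recorded in the log, ignored by the policy


# Hypothetical policy: resource -> names of principals allowed to write it.
WRITE_POLICY: dict[str, set[str]] = {
    "customer_records": {"alice", "billing-agent"},
}


def authorize_write(principal: Principal, resource: str) -> bool:
    """Apply the same write policy to humans and agents alike."""
    allowed = principal.name in WRITE_POLICY.get(resource, set())
    # Every decision is logged, allowed or denied, so evidence exists
    # whether the caller was a person or an agent.
    log.info("write %s by %s (%s): %s", resource, principal.name,
             principal.kind, "ALLOW" if allowed else "DENY")
    return allowed


if __name__ == "__main__":
    authorize_write(Principal("alice", "human"), "customer_records")
    authorize_write(Principal("billing-agent", "agent"), "customer_records")
    authorize_write(Principal("report-agent", "agent"), "customer_records")
```

Because the check never branches on `kind`, a human and an agent with the same entitlement get the same answer, which is the "regardless of whether it's human or agentic" property in code form.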

Reports like Darktrace’s 2026 State of AI Cybersecurity and Human Security’s AI Traffic and Cyberthreat Benchmarks highlight how large language models and automated agents introduce new exposure risks simply by virtue of their broad access. These reports and Karra's insights point to a security landscape where AI agents are at the far edges of exposure, interacting with users and with one another in ways that experts are only just beginning to understand. “When it comes to security, it's a continuously evolving field. Attackers improve every day, and technology does too. A lot of security problems often come down to best practices: good system design, good architecture, and good threat modeling.”