
To Trust AI Decisions, Enterprises Require Owned And Verified Data Inputs

Rodrigo Baptista, Fiscal Auditor for Rio de Janeiro, explains why AI decisions fail when input data lacks ownership and accountability upstream.


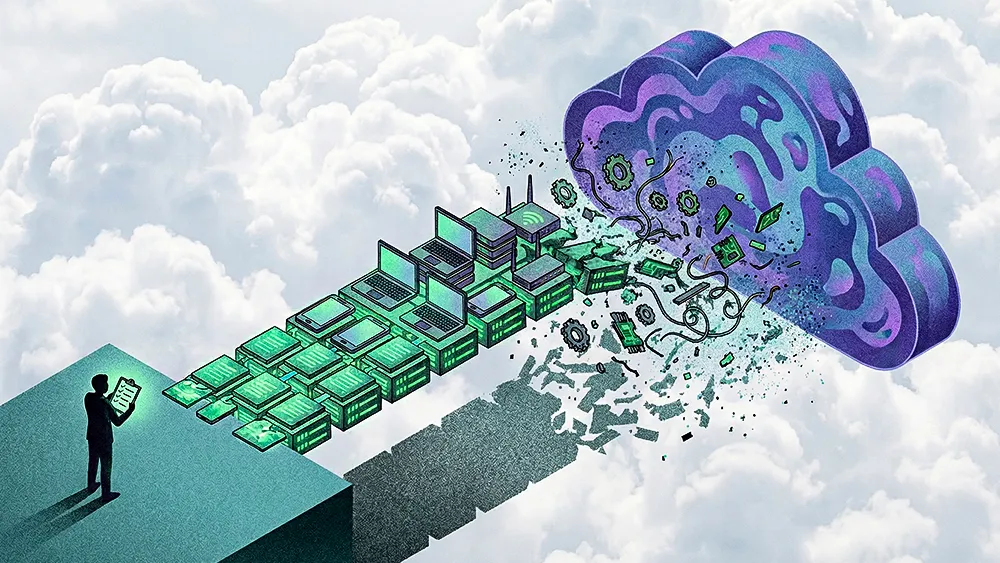

While the C-suite spends time chasing agentic systems and adjusting their executive priorities in 2026, a more fundamental problem is taking root beneath the surface. AI is optimized for speed and synthesis, but decision-making requires traceability and responsibility. Artificial intelligence models are generating conclusions based on data that no human has actually vouched for. In the push to eliminate manual work, organizations are feeding their workflows unverified information that lacks a clear owner and then trusting the outputs as though the inputs were sound.

Rodrigo Baptista treats the lack of clear accountability as a practical operational hurdle rather than a novel tech theory. As a Fiscal Auditor for Rio de Janeiro, he has spent more than 13 years overseeing inspection teams of over 500 auditors and coordinating tax operations for mega-events like Rock in Rio. He works in environments where untraceable information disrupts operations, which is why he pushes for a stricter upstream data governance model. "How can I make a decision if I don't know where the information generated by AI is coming from?" says Baptista. "If there's no one responsible for that data, then I can't trust the decision."

Ghost in the machine: Baptista is used to tracing every figure back to a responsible person, so AI output raises a fundamental question for him: how can you rely on information without knowing where it came from? "I found myself in a situation where I had to solve a family problem, and I saw that I had this AI tool that I could use. But the problem with this tool was that I had no idea where the information was coming from. How can I decide? How can I make decisions based on that information, that data?" The real failure point is upstream, where unverified information enters AI systems without ownership or accountability. Vendors often want the AI to police itself, using internal flags to catch errors without slowing down projects. But Baptista argues that by the time those flags fire, it is already too late.

Show your receipts: His response to that friction is simple: treat digital inputs the way auditors treat financial records. He wants every meaningful piece of data feeding a system to have a clearly identified human sponsor. "We need to update and take governance over the information before it goes into the AI. Not after," Baptista explains.

No sponsor, no service: At the core of his proposed framework is a simple checkpoint: if no named human stands behind a piece of data, it should not enter the system at all. "How can we take the information as correct if we don't have a human being responsible for that? So that's the first thing about my system. If you don't have a human being responsible for that information, the information is considered invalid."
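The checkpoint Baptista describes can be pictured as a gate that runs before ingestion. The following is a minimal sketch under stated assumptions, not his actual system: the `Record` shape, the `sponsor` field, and the `admit` function are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Record:
    """A piece of data waiting to enter an AI pipeline (hypothetical shape)."""
    payload: str
    sponsor: Optional[str] = None  # named human accountable for this record


def has_sponsor(record: Record) -> bool:
    """Return True only if a non-blank human sponsor is named."""
    return bool(record.sponsor and record.sponsor.strip())


def admit(records: list[Record]) -> tuple[list[Record], list[Record]]:
    """Split records into (accepted, rejected) before anything is ingested.

    Records without a named sponsor are treated as invalid and never
    reach the downstream system.
    """
    accepted = [r for r in records if has_sponsor(r)]
    rejected = [r for r in records if not has_sponsor(r)]
    return accepted, rejected
```

Calling `admit` on a batch returns the sponsored records for ingestion and the unsponsored ones for follow-up. Note that the sketch only checks that a name is present; confirming that the name belongs to a real, accountable person would need a separate step.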

For many leaders, the inability to trace information back to a named owner makes it harder to fully trust the conclusions generated by these systems. That underlying hesitation is becoming obvious as companies work through current privacy and governance benchmarks and look for ways to build customer confidence in AI outputs. Building trust doesn't require waiting for a smarter algorithm; it requires assigning a human sponsor to the data before it is ingested.

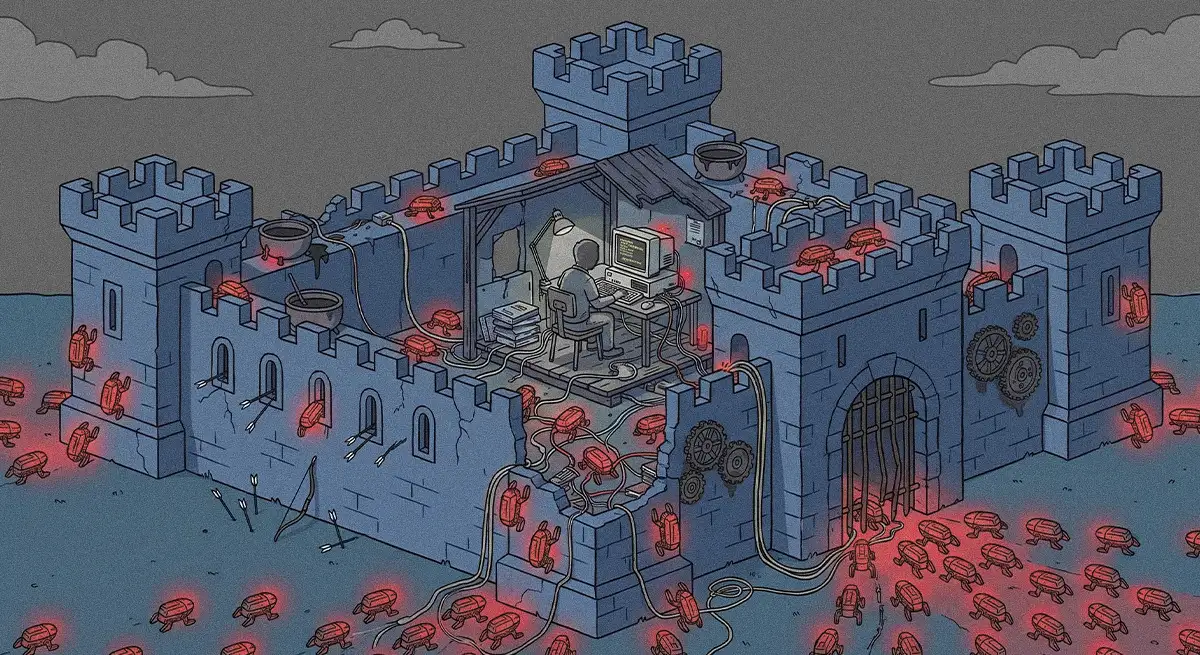

Weak data governance is now treated as a major liability. Regulators are beginning to circle, and some internal security teams are scrambling to lock down AI agents acting on flawed internal data. To manage that exposure, organizations are experimenting with governing autonomous agents, treating cybersecurity as a core pillar of enterprise AI programs, and building responsible frameworks into their use cases from the start.

Regulate or be regulated: Concerns about unregulated shadow AI and who should be accountable for AI security are landing on board agendas. The market is moving toward a period of heightened regulatory scrutiny of AI systems. Organizations that delay work on basic data governance invite preventable operational friction and regulatory headaches. Baptista sees the question of accountability as an unavoidable fork in the road: either companies take ownership of the data feeding their AI systems, or governments will step in and impose controls. "We are already at a point of no return where we are using AI. We have to decide whether we want more government control or more responsibility from companies. If they want to take care of the information, they get to make those decisions."

Responsibility is a requirement for achieving real return on investment from automation. In fast-moving rollouts, human checkpoints are often viewed as a drag on speed. Yet moving fast without trust in the underlying data turns into an operational liability. The current "AI confidence gap" is driven by unverifiable outputs built on ownerless inputs. By returning the focus to human accountability at the data level, companies can restore trust and safely scale their tech stacks.

For Baptista, this lack of confidence is the true limitation to the widespread adoption of AI. "Sometimes I listen to people talking about the bubble of the AI, but a great part of it is the lack of confidence people have when they don't have governance. If we can give a layer of confidence to these people, if we begin to take the responsibility for the decisions we make, we can go further and take more advantage of AI," he concludes.